Lexar ARMOR 700 Portable SSD Review: Power-Efficient 2 GBps in an IP66 Package

by Ganesh T S on May 30, 2024 8:00 AM EST - Posted in Storage, SSDs, Lexar, DAS, Silicon Motion, External SSDs, YMTC, Portable SSDs

Performance Benchmarks

Benchmarks such as ATTO and CrystalDiskMark help provide a quick look at the performance of the direct-attached storage device. The results translate to the instantaneous performance numbers that consumers can expect for specific workloads, but do not account for changes in behavior when the unit is subject to long-term conditioning and/or thermal throttling. Yet another use of these synthetic benchmarks is the ability to gather information regarding support for specific storage device features that affect performance.

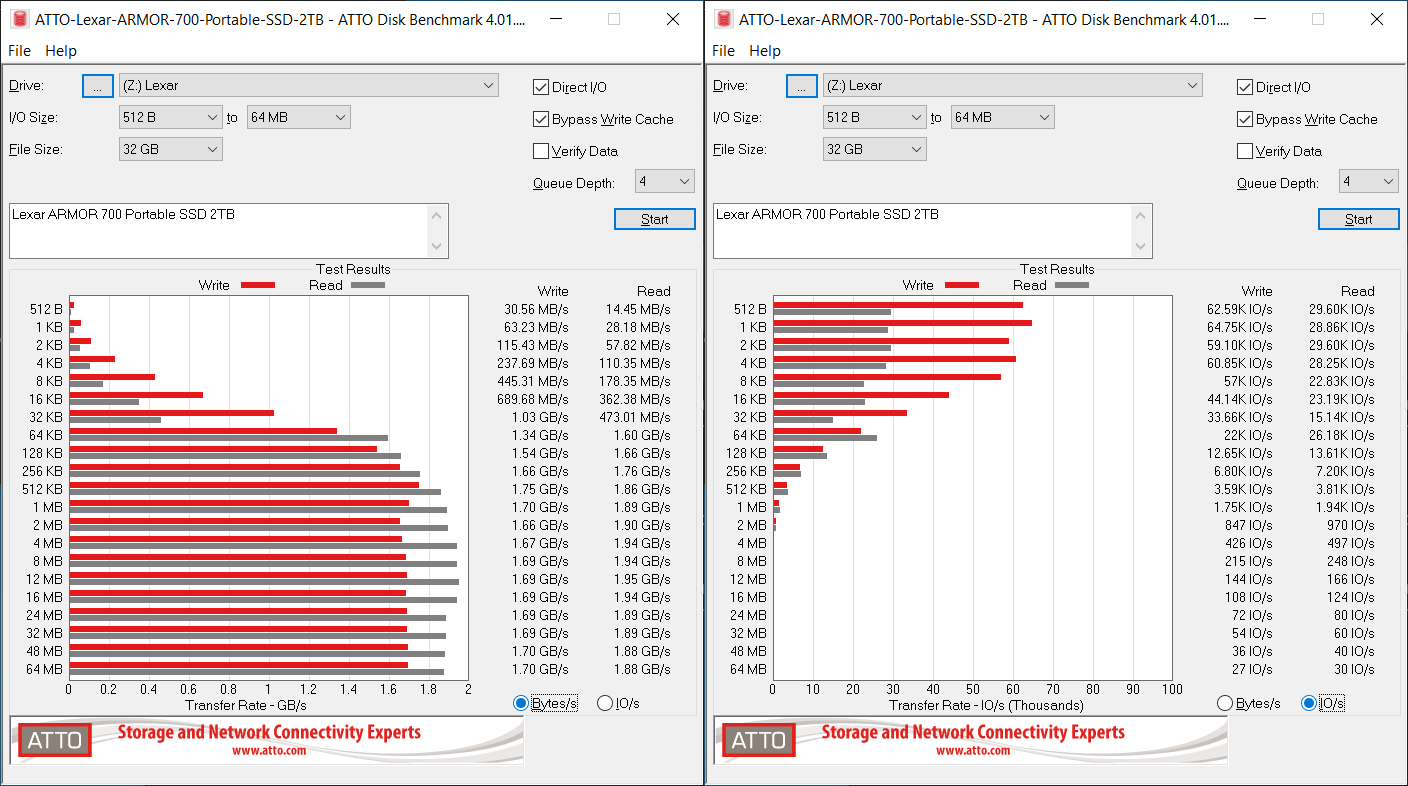

Lexar claims read and write speeds of 2000 MBps, but the ATTO benchmarks below do not back that up. ATTO benchmarking is restricted to a single configuration in terms of queue depth, and is only representative of a small sub-set of real-world workloads. It does allow the visualization of change in transfer rates as the I/O size changes, with optimal write performance being reached around 512 KB for a queue depth of 4.
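
One way to put a number on "where transfer rates stop improving" in an ATTO-style sweep is to find the smallest I/O size whose throughput comes within a chosen fraction of the sweep's peak. The sketch below assumes the sweep is available as a mapping from I/O size (KB) to MBps. The 95% cut-off and the example numbers are hypothetical and are not the ARMOR 700's measured results.

```python
def saturation_point(sweep, fraction=0.95):
    """Return the smallest I/O size (KB) whose throughput is within
    `fraction` of the best throughput seen in the sweep.

    `sweep` maps I/O size in KB to throughput in MBps.
    """
    if not sweep:
        raise ValueError("empty sweep")
    peak = max(sweep.values())
    for size in sorted(sweep):
        if sweep[size] >= fraction * peak:
            return size
    # Unreachable: the peak entry always satisfies the condition.
    raise AssertionError("no I/O size reached the threshold")


# Illustrative numbers only (not measured data).
EXAMPLE_SWEEP = {64: 900, 128: 1300, 256: 1600, 512: 1750, 1024: 1780, 2048: 1790}
print(saturation_point(EXAMPLE_SWEEP))  # 512
```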

ATTO Benchmarks

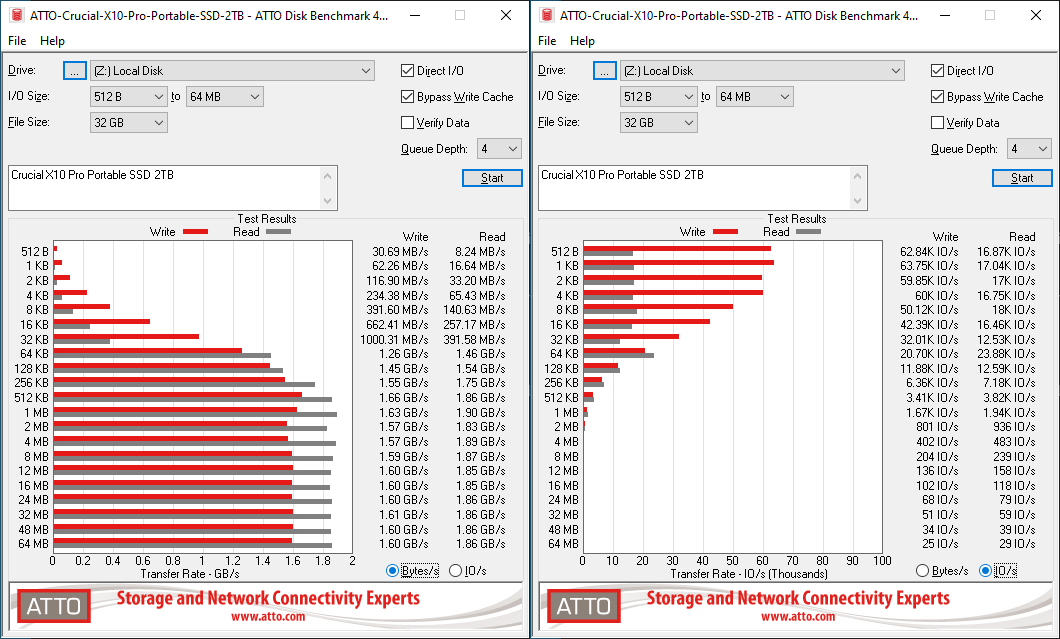

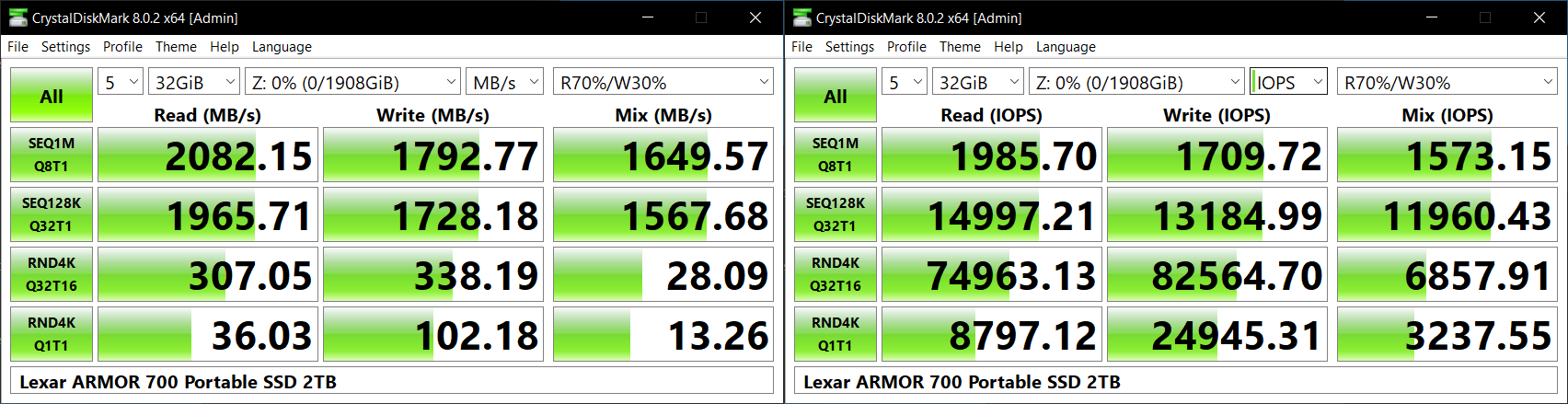

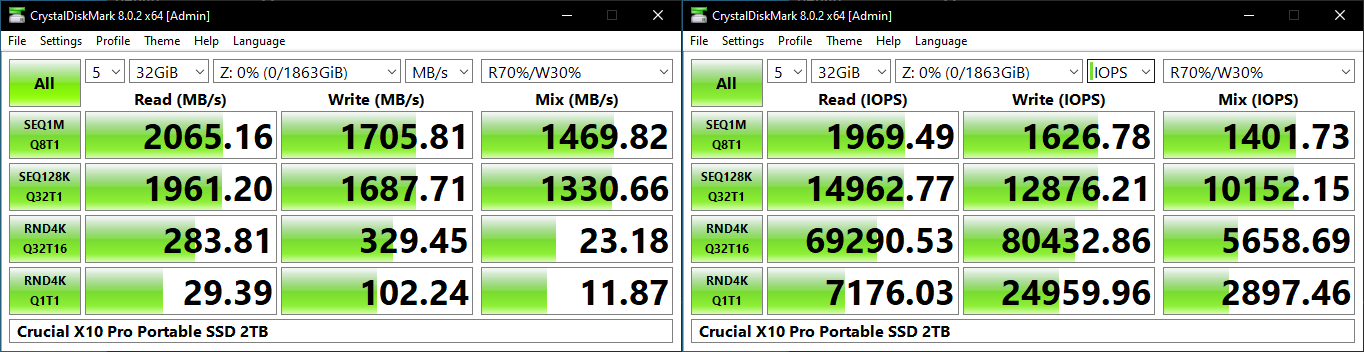

CrystalDiskMark, on the other hand, uses four different access traces for reads and writes over a configurable region size. Two of the traces are sequential accesses, while two are 4K random accesses. Internally, CrystalDiskMark uses the Microsoft DiskSpd storage testing tool. The 'Seq128K Q32T1' sequential traces use a 128K block size with a queue depth of 32 from a single thread, while the '4K Q32T16' one does random 4K accesses with the same queue depth, but from 16 threads. The 'Seq1M' traces use a 1MiB block size. The plain 'Rnd4K' one ('4K Q1T1') uses only a single queue and a single thread. Comparing the '4K Q32T16' and '4K Q1T1' numbers can quickly tell us whether the storage device supports NCQ (native command queuing) / UASP (USB-attached SCSI protocol). If the numbers for the two access traces are in the same ballpark, NCQ / UASP is not supported. This assumes that the host port / drivers on the PC support UASP.
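
The NCQ / UASP check described above boils down to a ratio test between the two random-read results. The sketch below is a heuristic under stated assumptions: the `min_ratio` value standing in for "not in the same ballpark" is hypothetical, and the test is only meaningful if the host port and drivers themselves support UASP.

```python
def queuing_likely_supported(q32t16_mbps, q1t1_mbps, min_ratio=2.0):
    """Heuristic: if 4K Q32T16 and 4K Q1T1 land in the same ballpark,
    NCQ / UASP is probably not in effect.

    `min_ratio` is an assumed cut-off for 'same ballpark'; it is not a
    value taken from CrystalDiskMark or any specification.
    """
    if q1t1_mbps <= 0:
        raise ValueError("Q1T1 throughput must be positive")
    # Deep queues across many threads only help when commands can be queued.
    return q32t16_mbps / q1t1_mbps >= min_ratio


print(queuing_likely_supported(300.0, 40.0))  # True
print(queuing_likely_supported(45.0, 40.0))   # False
```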

CrystalDiskMark Benchmarks

UASP and NCQ are supported, allowing the PSSD to deliver true SSD-like performance for random accesses. None of these workloads hit the 2000 MBps write speeds claimed by Lexar. From the market positioning, it is evident that the main competitor for the ARMOR 700 is Crucial's X10 Pro, which uses the same native UFD controller from Silicon Motion, albeit with Micron's B47R 176L 3D TLC NAND. The ARMOR 700 consistently outperforms the X10 Pro across the different CrystalDiskMark workloads within a 32GB span.

AnandTech DAS Suite - Benchmarking for Performance Consistency

Our testing methodology for storage bridges / direct-attached storage units takes into consideration the usual use-case for such devices. The most common usage scenario is the transfer of large amounts of photos and videos to and from the unit. Other usage scenarios include the use of the unit as a download or install location for games and importing files directly from it into a multimedia editing program such as Adobe Photoshop. Some users may even opt to boot an OS off an external storage device.

The AnandTech DAS Suite tackles the first use-case. The evaluation involves processing five different workloads:

- AV: Multimedia content with audio and video files totalling 24.03 GB over 1263 files in 109 sub-folders

- Home: Photos and document files totalling 18.86 GB over 7627 files in 382 sub-folders

- BR: Blu-ray folder structure totalling 23.09 GB over 111 files in 10 sub-folders

- ISOs: OS installation files (ISOs) totalling 28.61 GB over 4 files in one folder

- Disk-to-Disk: Addition of 223.32 GB spread over 171 files in 29 sub-folders to the above four workloads (total of 317.91 GB over 9176 files in 535 sub-folders)
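
The disk-to-disk totals follow from adding the four RAM-drive data sets to the extra 223.32 GB. The sketch below simply re-derives the size and file-count totals from the list above; `Decimal` is used so the GB figures add up without floating-point noise.

```python
from decimal import Decimal

# (size in GB, file count) for each RAM-drive data set, as listed above.
WORKLOADS = {
    "AV": (Decimal("24.03"), 1263),
    "Home": (Decimal("18.86"), 7627),
    "BR": (Decimal("23.09"), 111),
    "ISOs": (Decimal("28.61"), 4),
}
# Additional data that only the Disk-to-Disk workload includes.
DISK_TO_DISK_EXTRA = (Decimal("223.32"), 171)


def totals(sets, extra):
    """Sum sizes and file counts of all data sets plus the extra data."""
    size = sum(s for s, _ in sets.values()) + extra[0]
    files = sum(f for _, f in sets.values()) + extra[1]
    return size, files


size_gb, file_count = totals(WORKLOADS, DISK_TO_DISK_EXTRA)
print(size_gb, file_count)  # 317.91 9176
```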

Except for the 'Disk-to-Disk' workload, each data set is first placed in a 29GB RAM drive, and a robocopy command is issued to transfer it to the external storage unit (formatted in exFAT for flash-based units, and NTFS for HDD-based units).

```
robocopy /NP /MIR /NFL /J /NDL /MT:32 $SRC_PATH $DEST_PATH
```
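
For scripting the transfers, the command above can be assembled as an argument list and its result checked via robocopy's exit code, where values below 8 indicate success and 8 or above indicate that at least one copy failed. This is a minimal sketch: the helper names are hypothetical, the flag order mirrors the command shown above, and `run_robocopy` only works on Windows, where robocopy is available.

```python
import subprocess

# Flags from the command above:
#   /NP   no per-file progress percentage
#   /MIR  mirror the source tree (copies subfolders, purges extras at dest)
#   /NFL  don't log file names
#   /J    unbuffered I/O (intended for large files)
#   /NDL  don't log directory names
#   /MT:32  copy with 32 threads
ROBOCOPY_FLAGS = ["/NP", "/MIR", "/NFL", "/J", "/NDL", "/MT:32"]


def robocopy_command(src, dest):
    """Build the argument list for the robocopy invocation shown above."""
    return ["robocopy", *ROBOCOPY_FLAGS, src, dest]


def robocopy_succeeded(returncode):
    """Robocopy exit codes 0-7 mean success; 8 and above mean failures."""
    return 0 <= returncode < 8


def run_robocopy(src, dest):
    """Windows only: launch robocopy and report whether the copy succeeded."""
    result = subprocess.run(robocopy_command(src, dest))
    return robocopy_succeeded(result.returncode)
```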

Upon completion of the transfer (write test), the contents of the unit are read back into the RAM drive (read test) after a 10-second idling interval. This process is repeated three times for each workload. Read and write speeds, as well as the time taken to complete each pass, are recorded. Whenever possible, the temperature of the external storage device is recorded during the idling intervals. Bandwidth for each data set is computed as the average of all three passes.
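
The write / idle / read cycle can be sketched as a small harness. This is a hypothetical illustration rather than AnandTech's actual tooling: `write_pass` and `read_pass` stand for one copy each (for example, a robocopy call), `read_temp` is an assumed callable that returns the drive temperature and is only invoked while the drive is idle, and "average of all three passes" is taken here to mean the arithmetic mean of the per-pass rates, with MBps computed as 10^6 bytes per second.

```python
import statistics
import time


def run_workload(write_pass, read_pass, size_bytes, passes=3, idle_s=10,
                 read_temp=None, sleep=time.sleep, clock=time.perf_counter):
    """Run `passes` write/idle/read cycles and return
    (mean write MBps, mean read MBps, temperatures read while idle)."""
    write_mbps, read_mbps, temps = [], [], []
    for _ in range(passes):
        # Write test: RAM drive -> external unit.
        start = clock()
        write_pass()
        write_mbps.append(size_bytes / 1e6 / (clock() - start))

        # Idle interval; temperature is only sampled here so that the
        # query cannot interfere with the transfers themselves.
        sleep(idle_s)
        if read_temp is not None:
            temps.append(read_temp())

        # Read test: external unit -> RAM drive.
        start = clock()
        read_pass()
        read_mbps.append(size_bytes / 1e6 / (clock() - start))
    return statistics.mean(write_mbps), statistics.mean(read_mbps), temps
```

Injecting `sleep` and `clock` keeps the harness testable without real 10-second waits or real drives.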

The 'Disk-to-Disk' workload involves a similar process, but with one iteration only. The data is copied to the external unit from the CPU-attached NVMe drive, and then copied back to the internal drive. It involves a larger amount of continuous data transfer in a single direction, as data that doesn't fit in the RAM drive is also part of the workload set.

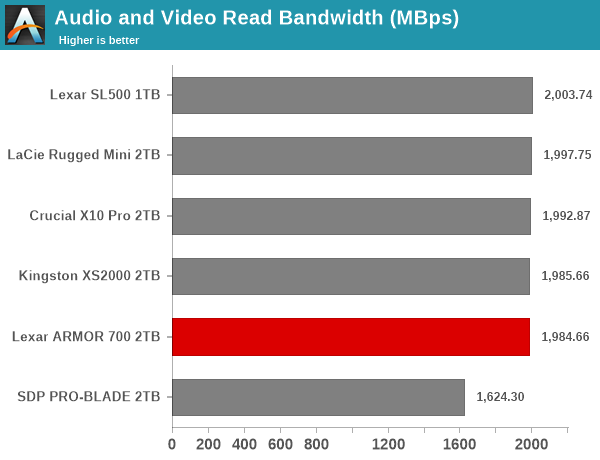

It can be seen that there is no significant gulf in the numbers between the different units for read-heavy workloads. However, for pure writes and mixed workloads, the ARMOR 700 finds itself in the middle of the pack. For all practical purposes, the casual user will notice no difference between them in the course of normal usage. However, power users may want to dig deeper to understand the limits of each device. To address this concern, we also instrumented our evaluation scheme for determining performance consistency.

Performance Consistency

Aspects influencing the performance consistency include SLC caching and thermal throttling / firmware caps on access rates to avoid overheating. This is important for power users, as the last thing that they want to see when copying hundreds of gigabytes of data is the transfer rate dropping to USB 2.0 speeds.
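
Given a trace of instantaneous transfer rates, sustained slowdowns (for example, after the SLC cache fills up or throttling kicks in) can be located by looking for runs of consecutive samples below a threshold. In this sketch, the 40 MBps default is an assumption meant to approximate practical USB 2.0 throughput, and `min_len` (how many consecutive slow samples count as a sustained drop) is likewise a hypothetical parameter.

```python
def slow_segments(samples_mbps, threshold_mbps=40.0, min_len=5):
    """Return (start, end) index ranges, end exclusive, where at least
    `min_len` consecutive samples fall below `threshold_mbps`."""
    segments = []
    start = None
    for i, rate in enumerate(samples_mbps):
        if rate < threshold_mbps:
            if start is None:
                start = i  # a slow run begins
        elif start is not None:
            if i - start >= min_len:
                segments.append((start, i))
            start = None  # the slow run ended
    # Close a slow run that lasts until the end of the trace.
    if start is not None and len(samples_mbps) - start >= min_len:
        segments.append((start, len(samples_mbps)))
    return segments


trace = [1000] * 10 + [30] * 6 + [900] * 4 + [20] * 2
print(slow_segments(trace))  # [(10, 16)]
```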

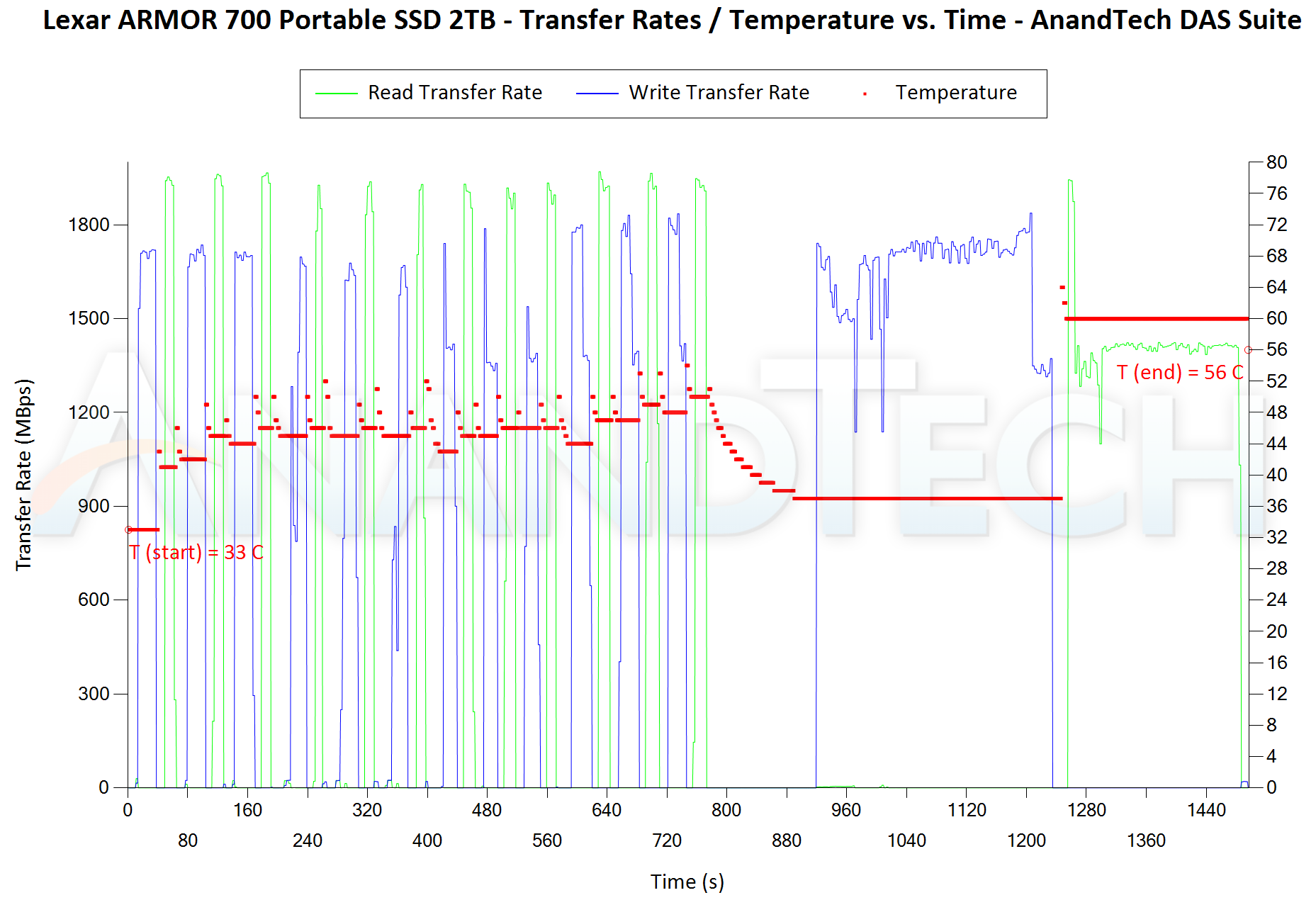

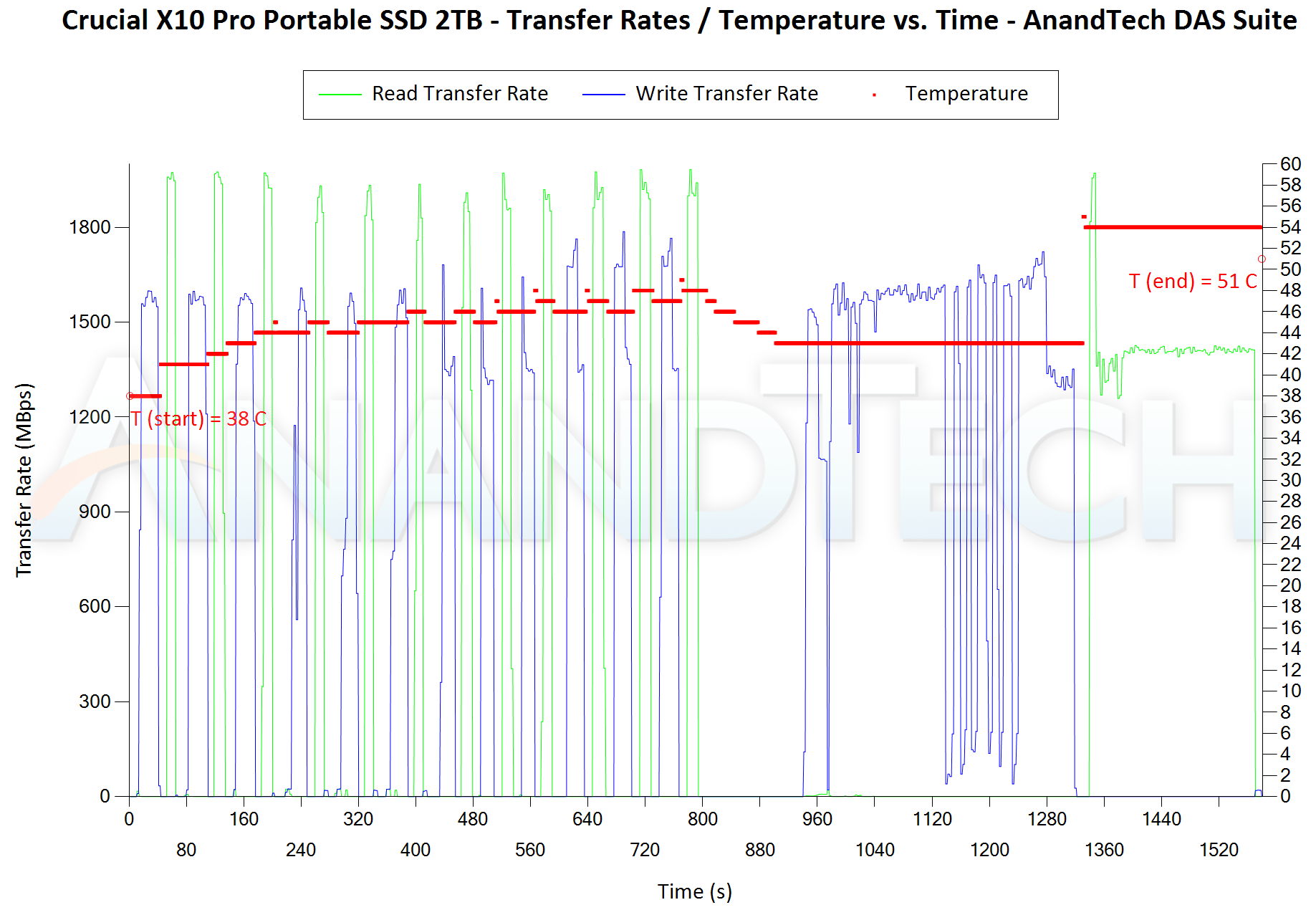

In addition to tracking the instantaneous read and write speeds of the DAS when processing the AnandTech DAS Suite, the temperature of the drive was also recorded. In earlier reviews, we used to track the temperature all through. However, we have observed that SMART read-outs for the temperature in NVMe SSDs using USB 3.2 Gen 2 bridge chips end up negatively affecting the actual transfer rates. To avoid this problem, we have restricted ourselves to recording the temperature only during the idling intervals. The graphs below present the recorded data.

AnandTech DAS Suite - Performance Consistency

The first three sets of writes and reads correspond to the AV suite. A small gap (for the transfer of the next data set from the internal SSD to the RAM drive) is followed by three sets for the Home suite. Another small RAM-drive transfer gap is followed by three sets for the Blu-ray folder. This is followed by the large-sized ISO files set. Finally, we have the single disk-to-disk transfer set. The ARMOR 700 exhibits remarkable consistency across all of the RAM drive transfer sets. In the disk-to-disk test, its performance profile is surpassed only by the LaCie Rugged Mini among SM2320-based PSSDs, and it fares better than the Crucial X10 Pro. Bridge-based PSSDs like the SanDisk Professional PRO-BLADE perform much better because of their DRAM-equipped internal SSD. Interestingly, the temperatures recorded in the course of the above workload peaked at 64C (compared to 58C for the SL500, 55C for the X10 Pro, and 50C for the LaCie Rugged Mini). The absence of an explicit thermal solution proves to be a handicap here.

PCMark 10 Storage Bench - Real-World Access Traces

There are a number of storage benchmarks that can subject a device to artificial access traces by varying the mix of reads and writes, the access block sizes, and the queue depth / number of outstanding data requests. We saw results from two popular ones - ATTO, and CrystalDiskMark - in a previous section. More serious benchmarks, however, actually replicate access traces from real-world workloads to determine the suitability of a particular device for a particular workload. Real-world access traces may be used for simulating the behavior of computing activities that are limited by storage performance. Examples include booting an operating system or loading a particular game from the disk.

PCMark 10's storage bench (introduced in v2.1.2153) includes four storage benchmarks that use relevant real-world traces from popular applications and common tasks to fully test the performance of the latest modern drives:

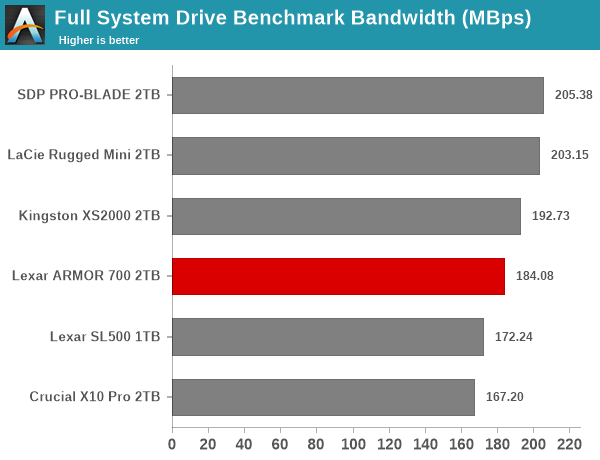

- The Full System Drive Benchmark uses a wide-ranging set of real-world traces from popular applications and common tasks to fully test the performance of the fastest modern drives. It involves a total of 204 GB of write traffic.

- The Quick System Drive Benchmark is a shorter test with a smaller set of less demanding real-world traces. It subjects the device to 23 GB of writes.

- The Data Drive Benchmark is designed to test drives that are used for storing files rather than applications. These typically include NAS drives, USB sticks, memory cards, and other external storage devices. The device is subjected to 15 GB of writes.

- The Drive Performance Consistency Test is a long-running and extremely demanding test with a heavy, continuous load for expert users. In-depth reporting shows how the performance of the drive varies under different conditions. This writes more than 23 TB of data to the drive.

Despite the data drive benchmark appearing most suitable for testing direct-attached storage, we opt to run the full system drive benchmark as part of our evaluation flow. Many of us use portable flash drives as boot drives and storage for Steam games. These types of use-cases are addressed only in the full system drive benchmark.

The Full System Drive Benchmark comprises 23 different traces. For the purpose of presenting results, we classify them under five different categories:

- Boot: Replay of storage access trace recorded while booting Windows 10

- Creative: Replay of storage access traces recorded during the startup and usage of Adobe applications such as Acrobat, After Effects, Illustrator, Premiere Pro, Lightroom, and Photoshop.

- Office: Replay of storage access traces recorded during the usage of Microsoft Office applications such as Excel and PowerPoint.

- Gaming: Replay of storage access traces recorded during the startup of games such as Battlefield V, Call of Duty: Black Ops 4, and Overwatch.

- File Transfers: Replay of storage access traces (Write-Only, Read-Write, and Read-Only) recorded during the transfer of data such as ISOs and photographs.
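
The grouping of the 23 traces into five categories can be written down as a simple lookup table, using the trace tags that appear in the sub-sections on this page. The Creative sub-section also names the exc (Excel) trace; in this sketch it is kept only under Office so that each of the 23 traces is counted exactly once. The table and helper are illustrative and are not part of PCMark 10 itself.

```python
# Trace tags per reporting category (tags as used on this page).
FULL_SYSTEM_TRACES = {
    "Boot": ["boot"],
    "Creative": ["sacr", "saft", "sill", "spre", "slig", "sps",
                 "aft", "ill", "ind", "psh", "psl"],
    "Office": ["exc", "pow"],
    "Gaming": ["bf", "cod", "ow"],
    "File Transfers": ["cp1", "cp2", "cp3", "cps1", "cps2", "cps3"],
}


def category_of(trace):
    """Return the reporting category for a PCMark 10 trace tag."""
    for category, traces in FULL_SYSTEM_TRACES.items():
        if trace in traces:
            return category
    raise KeyError(trace)


all_traces = [t for ts in FULL_SYSTEM_TRACES.values() for t in ts]
print(len(all_traces))  # 23
```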

PCMark 10 also generates an overall score, bandwidth, and average latency number for quick comparison of different drives. The sub-sections in the rest of the page reference the access traces specified in the PCMark 10 Technical Guide.

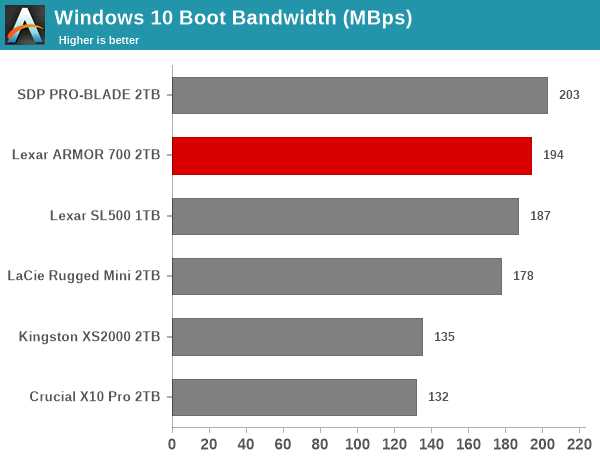

Booting Windows 10

The read-write bandwidth recorded for each drive in the boot access trace (the tag given in the official PCMark technical guide) is presented below.

The ARMOR 700 can't match the performance of the SanDisk Professional PRO-BLADE with its DRAM-equipped internal drive. However, among the native controller-based units, the ARMOR 700 comes out on top. Booting an OS requires good random access performance for small-sized accesses, so it is no surprise that a DRAM-backed FTL (flash translation layer) works better in that case.
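
Why DRAM helps with small random accesses can be illustrated with a toy model, which is a simplification and not a description of the SM2320's actual firmware. The logical-to-physical (L2P) map is split into pages, a DRAM-less controller can keep only a few of them in on-chip memory, and every miss stands for an extra NAND read to fetch a map page. A DRAM-equipped SSD, by contrast, can hold the whole map and largely avoids these misses. The page size, cache size, and span used below are all assumed values.

```python
import random
from collections import OrderedDict


def l2p_misses(lbas, entries_per_page=1024, cached_pages=8):
    """Count map-page misses for a sequence of 4K LBAs, with an LRU cache
    holding `cached_pages` pages of `entries_per_page` L2P entries each."""
    cache = OrderedDict()
    misses = 0
    for lba in lbas:
        page = lba // entries_per_page
        if page in cache:
            cache.move_to_end(page)  # mark as most recently used
        else:
            misses += 1  # would cost an extra NAND read for the map page
            cache[page] = True
            if len(cache) > cached_pages:
                cache.popitem(last=False)  # evict least recently used
    return misses


span = 8_000_000  # number of 4K LBAs, roughly a 32 GB span
rng = random.Random(0)
scattered = [rng.randrange(span) for _ in range(10_000)]
print(l2p_misses(range(10_000)))  # 10
print(l2p_misses(scattered))      # close to one miss per access
```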

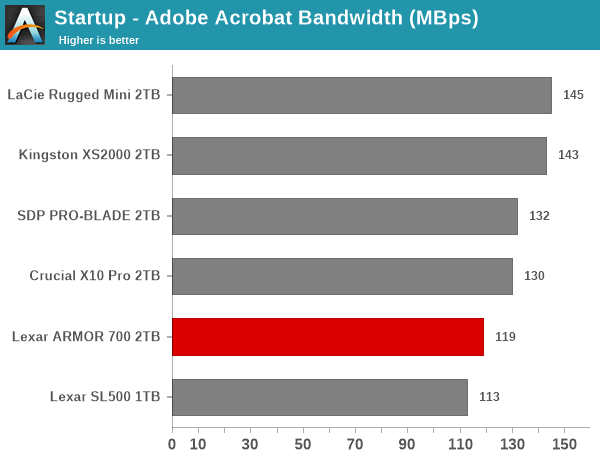

Creative Workloads

The read-write bandwidth recorded for each drive in the sacr, saft, sill, spre, slig, sps, aft, exc, ill, ind, psh, and psl access traces is presented below.

Bridge-based solutions perform better for most of the creative workloads, while the native controller solutions perform similarly to one another. The ARMOR 700's NAND and firmware configuration is meant to deliver better performance than the SL500, and that pans out in almost all of the above workloads. While the numbers are close to each other, different drives perform well under different traffic scenarios.

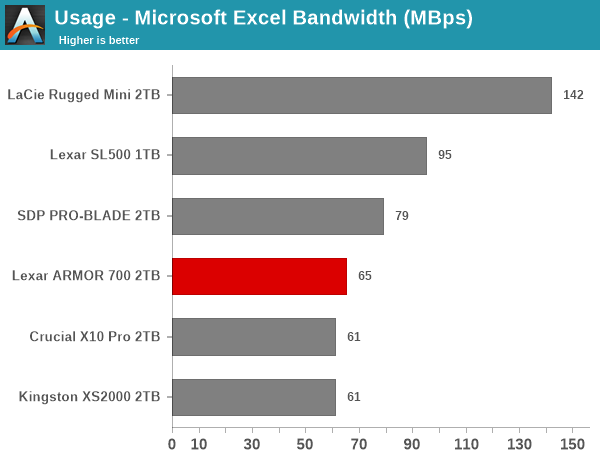

Office Workloads

The read-write bandwidth recorded for each drive in the exc and pow access traces is presented below.

Interestingly, compared to the SL500, the firmware configuration of the ARMOR 700 ends up hurting performance in the Office workloads. As a result, we see it in the bottom half of the pack for both trace components.

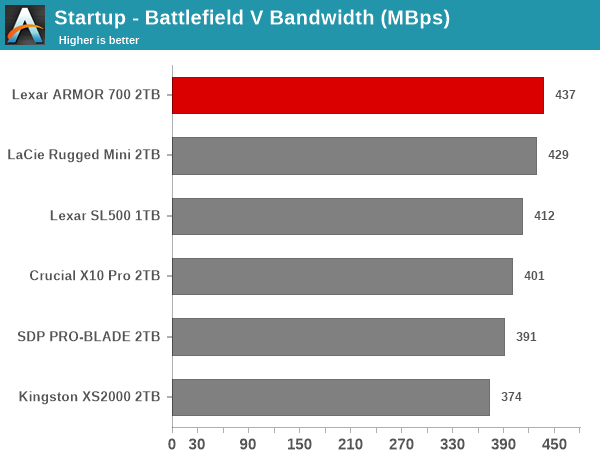

Gaming Workloads

The read-write bandwidth recorded for each drive in the bf, cod, and ow access traces is presented below.

Game startup workloads are read-heavy with a large number of sequential accesses. Under these conditions, most PSSDs in the same speed class perform similarly. The ARMOR 700 lands in three different positions (top, middle of the pack, and last) across the three trace components. On average, end-users are unlikely to notice major differences in game loading times across different PSSDs in the same speed class.

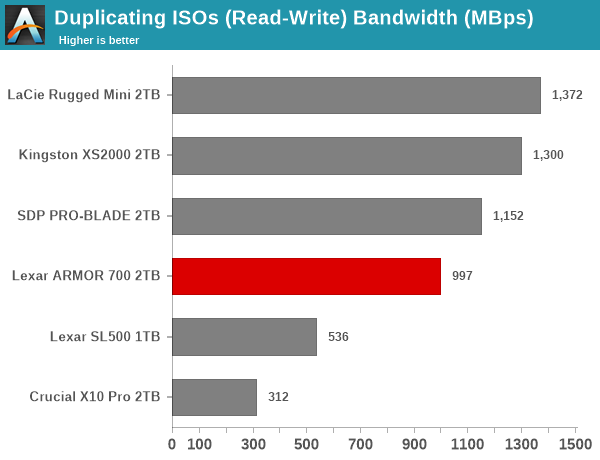

File Transfer Workloads

The read-write bandwidth recorded for each drive in the cp1, cp2, cp3, cps1, cps2, and cps3 access traces is presented below.

The file transfer workloads evaluate the same scenario as our custom DAS suite, and we find the relative ordering of the different PSSDs to be very similar. The ARMOR 700 manages to outperform the SL500 across all trace components. Except for one mixed workload case, the ARMOR 700 also finds itself ahead of the Crucial X10 Pro.

Overall Scores

PCMark 10 reports an overall score based on the observed bandwidth and access times for the full workload set. The score, bandwidth, and average access latency for each of the drives are presented below.

The ARMOR 700 lands in the lower half of the pack in the overall PCMark 10 Storage Bench scores, just ahead of the SL500. There are SM2320-based PSSDs both above and below the ARMOR 700, with the SanDisk Professional PRO-BLADE being the leader (thanks to its DRAM-equipped internal SSD).
