NVIDIA Unveils Updated GH200 'Grace Hopper' Superchip with HBM3e Memory, Shipping in Q2'2024

by Gavin Bonshor on August 8, 2023 2:20 PM EST

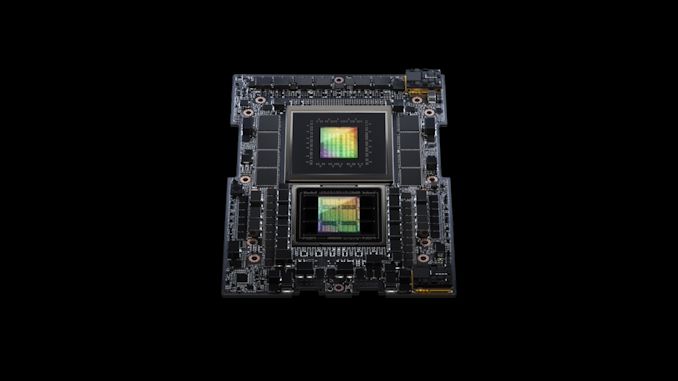

At SIGGRAPH in Los Angeles, NVIDIA unveiled a new variant of their GH200 'superchip,' which is set to be the world's first GPU chip equipped with HBM3e memory. Built to crunch the world's most complex generative AI workloads, the NVIDIA GH200 platform is intended to push the envelope of accelerated computing. Pooling their strengths in the GPU space with their growing efforts in the CPU space, NVIDIA is looking to deliver a semi-integrated design to conquer the highly competitive and complicated high-performance computing (HPC) market.

Although we've covered some of the finer details of NVIDIA's Grace Hopper-related announcements, including their disclosure that GH200 has entered full production, NVIDIA's latest announcement is that a new GH200 variant with HBM3e memory is coming later, in Q2 of 2024, to be exact. This is in addition to the already announced GH200 with HBM3, which is currently in production and due to land later this year. This means NVIDIA has two versions of the same product, with the HBM3-based GH200 arriving first and the HBM3e-based GH200 set to follow.

| NVIDIA Grace Hopper Specifications | Grace Hopper (GH200) w/HBM3 | Grace Hopper (GH200) w/HBM3e |
|---|---|---|
| CPU Cores | 72 | 72 |
| CPU Architecture | Arm Neoverse V2 | Arm Neoverse V2 |
| CPU Memory Capacity | <=480GB LPDDR5X (ECC) | <=480GB LPDDR5X (ECC) |
| CPU Memory Bandwidth | <=512GB/sec | <=512GB/sec |
| GPU SMs | 132 | 132? |
| GPU Tensor Cores | 528 | 528? |
| GPU Architecture | Hopper | Hopper |
| GPU Memory Capacity | 96GB (Physical), <=96GB (Available) | 144GB (Physical), 141GB (Available) |
| GPU Memory Bandwidth | <=4TB/sec | 5TB/sec |
| GPU-to-CPU Interface | 900GB/sec NVLink 4 | 900GB/sec NVLink 4 |
| TDP | 450W - 1000W | 450W - 1000W |
| Manufacturing Process | TSMC 4N | TSMC 4N |
| Interface | Superchip | Superchip |
| Available | H2'2023 | Q2'2024 |

During their keynote at SIGGRAPH 2023, President and CEO of NVIDIA, Jensen Huang, said, "To meet surging demand for generative AI, data centers require accelerated computing platforms with specialized needs." Jensen also went on to say, "The new GH200 Grace Hopper Superchip platform delivers this with exceptional memory technology and bandwidth to improve throughput, the ability to connect GPUs to aggregate performance without compromise, and a server design that can be easily deployed across the entire data center."

NVIDIA's GH200 GPU is set to be the world's first chip to ship with HBM3e memory, an updated version of the high-bandwidth memory with even greater bandwidth and, critically for NVIDIA, higher capacity 24GB stacks. This will allow NVIDIA to expand its local GPU memory from 96GB per GPU to 144GB (6 x 24GB stacks), a 50% increase that should be especially welcome for the AI market, where top models are massive in size and often memory capacity bound. In a dual configuration setup, it will be available with up to 282 GB of HBM3e memory, which NVIDIA states "delivers up to 3.5 x more memory capacity and 3 x more bandwidth than the current generation offering."
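For readers who want to check the capacity math, here is a minimal sketch using only the figures quoted in this article. The variable names are illustrative, and the 141GB usable figure is taken from the specifications table rather than from NVIDIA's quote, so treating the 282GB dual-configuration number as two times 141GB is an assumption.

```python
# Figures quoted in the article; names are illustrative, not NVIDIA's.
HBM3E_STACK_GB = 24        # capacity of one HBM3e stack
STACKS_PER_GPU = 6         # stacks on the GH200's GPU
HBM3_PHYSICAL_GB = 96      # physical capacity of the HBM3 version
HBM3E_AVAILABLE_GB = 141   # usable capacity of the HBM3e version (from the table)

# Physical HBM3e capacity per GPU: 6 stacks of 24GB each.
physical_gb = HBM3E_STACK_GB * STACKS_PER_GPU

# Relative increase over the 96GB HBM3 version, in percent.
increase_pct = (physical_gb - HBM3_PHYSICAL_GB) / HBM3_PHYSICAL_GB * 100

# Assumption: the dual-configuration figure is two GPUs' usable memory.
dual_available_gb = 2 * HBM3E_AVAILABLE_GB

print(f"Physical HBM3e per GPU: {physical_gb} GB")
print(f"Increase over HBM3: {increase_pct:.0f}%")
print(f"Dual configuration (usable): {dual_available_gb} GB")
```

Under these assumptions the numbers line up with the 144GB, 50%, and 282GB figures cited above.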

Perhaps one of the most notable details NVIDIA shares is that the incoming GH200 GPU with HBM3e is 'fully' compatible with the already announced NVIDIA MGX server specification, unveiled at Computex. This allows system manufacturers to deploy it across over 100 different server variations, and it is designed to offer a quick and cost-effective upgrade path.

NVIDIA claims that the GH200 GPU with HBM3e provides up to 50% faster memory performance than the current HBM3 memory and delivers up to 10 TB/s of combined bandwidth in a dual configuration, or up to 5 TB/s per chip.

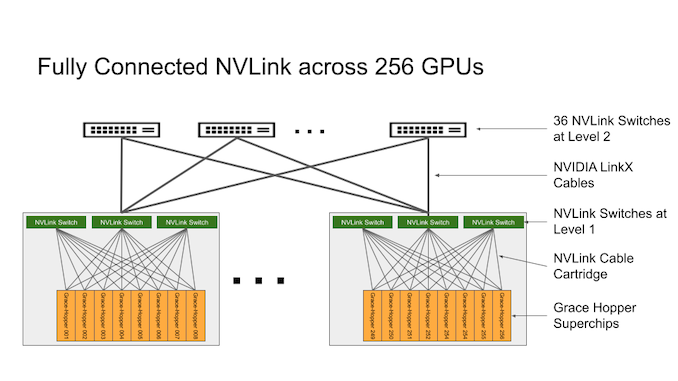

We've already covered the announced DGX GH200 AI Supercomputer built around NVIDIA's Grace Hopper platform. The DGX GH200 is a 24-rack cluster built entirely on NVIDIA's architecture, with a single DGX GH200 combining 256 chips and offering 120 TB of CPU-attached memory. These are connected using NVIDIA's NVLink, with up to 96 local L1 switches providing immediate communication between GH200 blades. NVIDIA's NVLink allows the deployments to work together over a high-speed, coherent interconnect, giving the GH200's GPU full access to CPU memory and providing access to up to 1.2 TB of memory in a dual configuration.
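The memory totals here can be roughly reconstructed from the per-chip figures in the specifications table. The sketch below is a back-of-the-envelope check, not NVIDIA's own accounting: it assumes 1 TB = 1024 GB, and it assumes the 1.2 TB dual-configuration figure is the sum of CPU memory and usable HBM3e across two superchips. Neither assumption is confirmed by the source.

```python
# Per-chip figures from the specifications table; names are illustrative.
CHIPS_PER_DGX = 256        # superchips in a single DGX GH200
CPU_MEMORY_GB = 480        # LPDDR5X upper bound per superchip
HBM3E_AVAILABLE_GB = 141   # usable HBM3e per GPU
GB_PER_TB = 1024           # assumption: binary TB convention

# CPU-attached memory across the whole DGX GH200.
cpu_attached_tb = CHIPS_PER_DGX * CPU_MEMORY_GB / GB_PER_TB

# Assumption: dual-configuration total = 2 x (CPU memory + usable HBM3e).
dual_total_gb = 2 * (CPU_MEMORY_GB + HBM3E_AVAILABLE_GB)

print(f"CPU-attached memory per DGX GH200: {cpu_attached_tb:.0f} TB")
print(f"Dual configuration total: {dual_total_gb} GB")
```

Under these assumptions, the cluster total comes out to the quoted 120 TB, and the dual-configuration sum lands at roughly 1.2 TB.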

NVIDIA states that leading system manufacturers are expected to deliver GH200-based systems with HBM3e memory sometime in Q2 of 2024. It should also be noted that GH200 with HBM3 memory is currently in full production and is set to be launched by the end of this year. We expect to hear more about GH200 with HBM3e memory from NVIDIA in the coming months.

Source: NVIDIA

4 Comments


Scabies - Tuesday, August 8, 2023

> NVIDIA's GH200 GPU is set to be the world's first chip, complete with HBM3e memory

Looks like AI isn't quite there yet.

mode_13h - Tuesday, August 8, 2023

Initially, I saw the "G" in front of GH200, and I thought "neat, a version with graphics units!". But, maybe it's just there because "Grace Hopper"? Lame.

Regarding the 3x memory bandwidth claim, I'm guessing that's in relation to a dual-populated "superchip" module, where each chip gets 5 TB/s, yielding 10 TB/s. That figure is then compared to the H100 SXM @ 3 TB/s. So, it's more like a 67% improvement per GPU.

It annoyed me to hear that claim, because I was sure HBM3e wasn't 3x as fast as even HBM2e, which is what the slowest H100 used.

lemurbutton - Wednesday, August 9, 2023

Who cares? This is not marketed towards you.

Qasar - Sunday, August 13, 2023

or you, as you will claim m1/m2/m3/m4 etc will beat it.

besides, you wouldnt want to upset your bosses when you but something that isnt made by apple, would you ?