AMD Releases EPYC 8004 "Siena" CPUs: Zen 4c For Edge-Optimized Server Chips

by Ryan Smith on September 18, 2023 9:00 AM EST

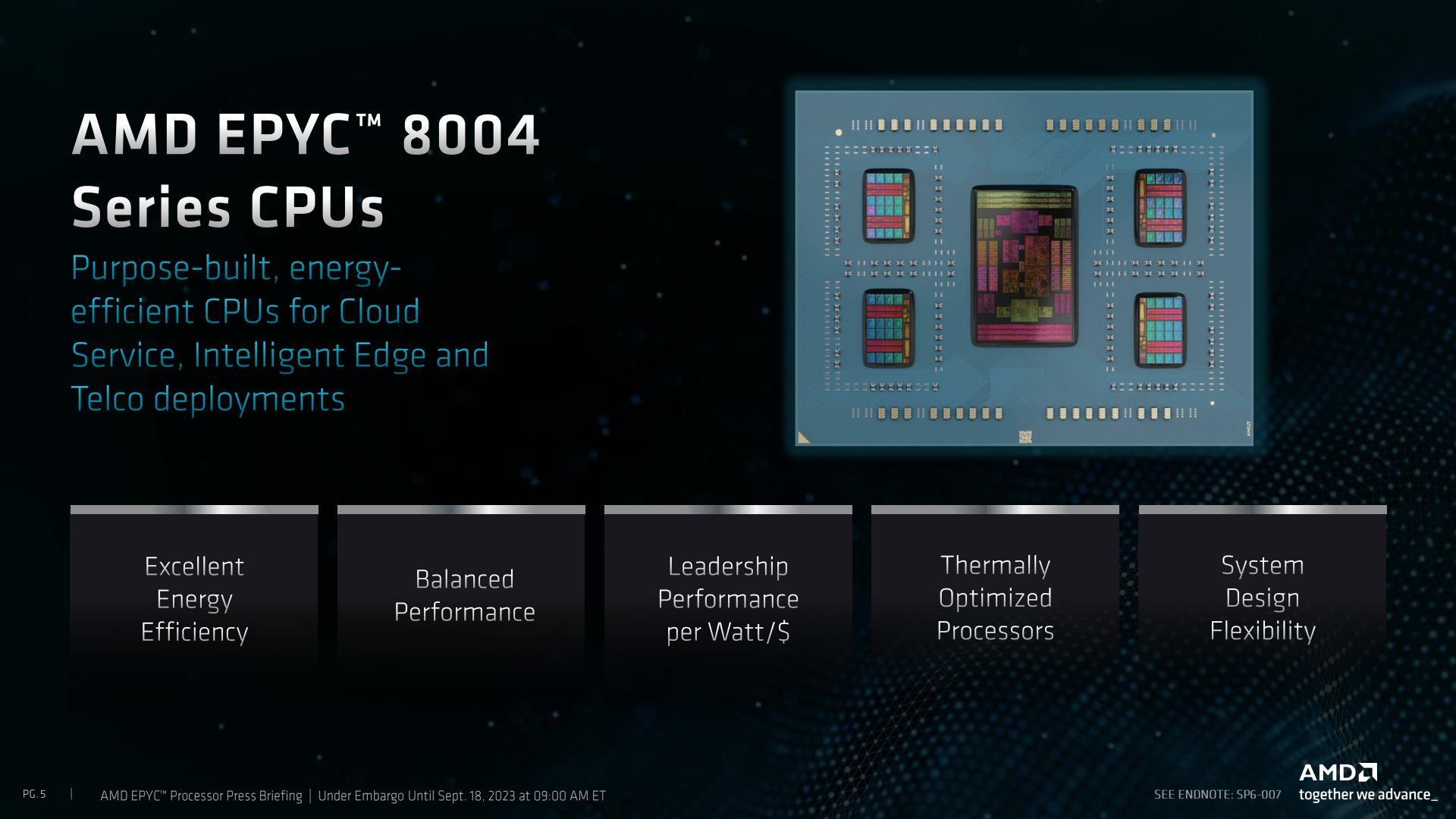

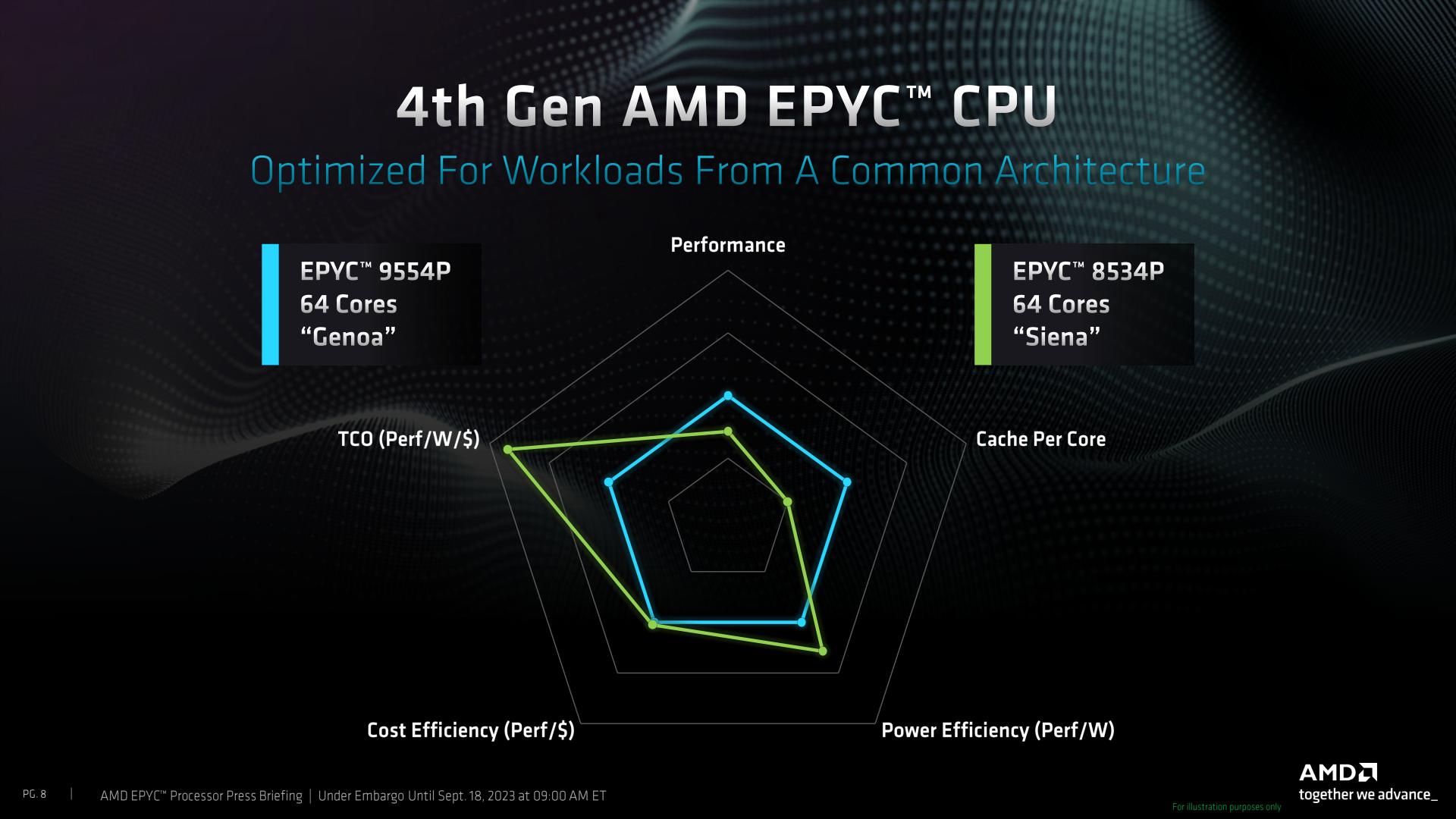

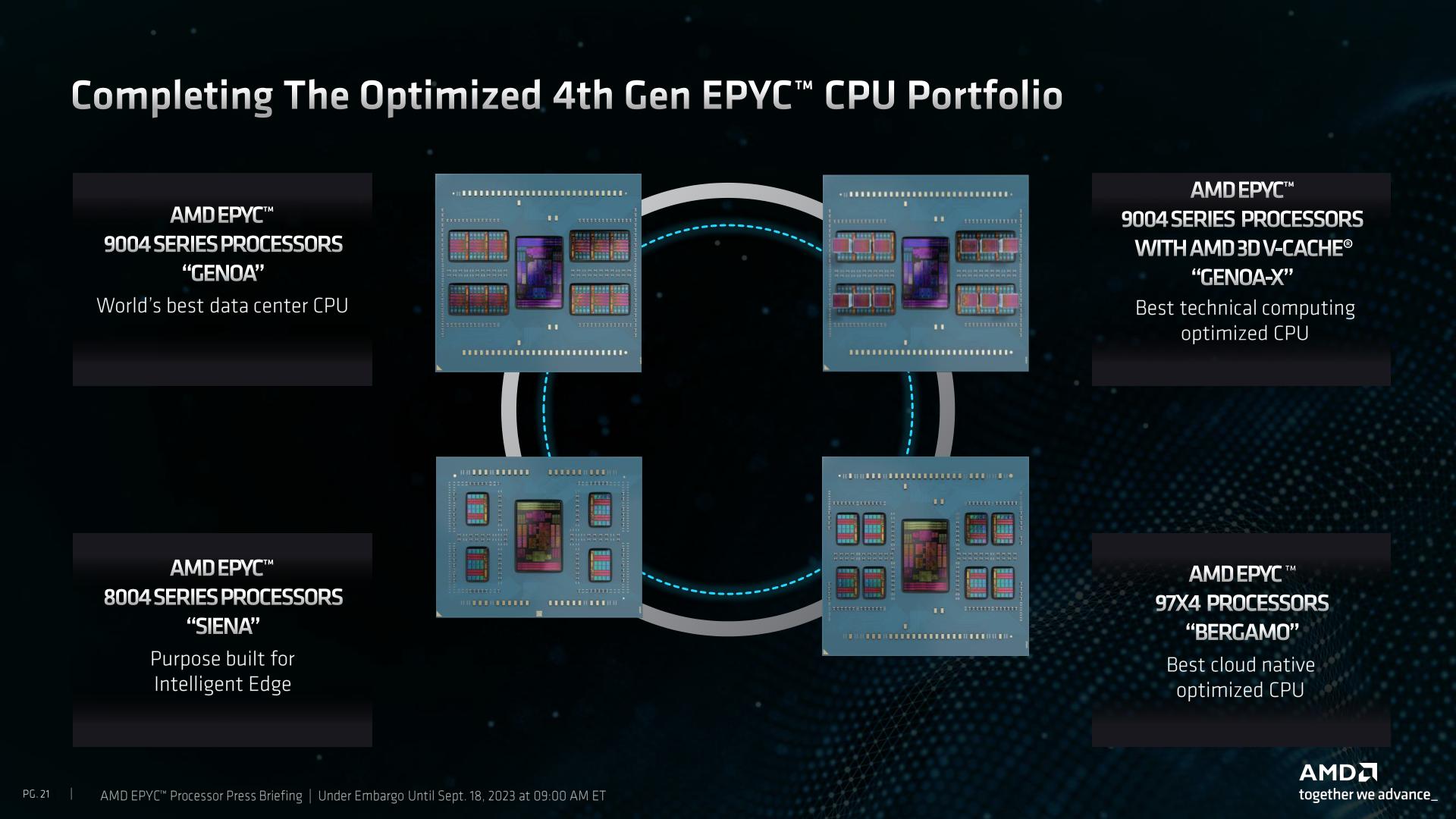

AMD this morning is releasing the fourth and final member of its 4th generation EPYC processor family, the EPYC 8004 series. Previously disclosed under the codename Siena, the EPYC 8004 series is AMD’s low-cost subset of EPYC CPUs, aimed at the telco, edge, and other price- and efficiency-sensitive market segments. Based on the same Zen 4c cores as Bergamo, Siena is basically Bergamo-light, using the same hardware to offer server processors with between 8 and 64 CPU cores.

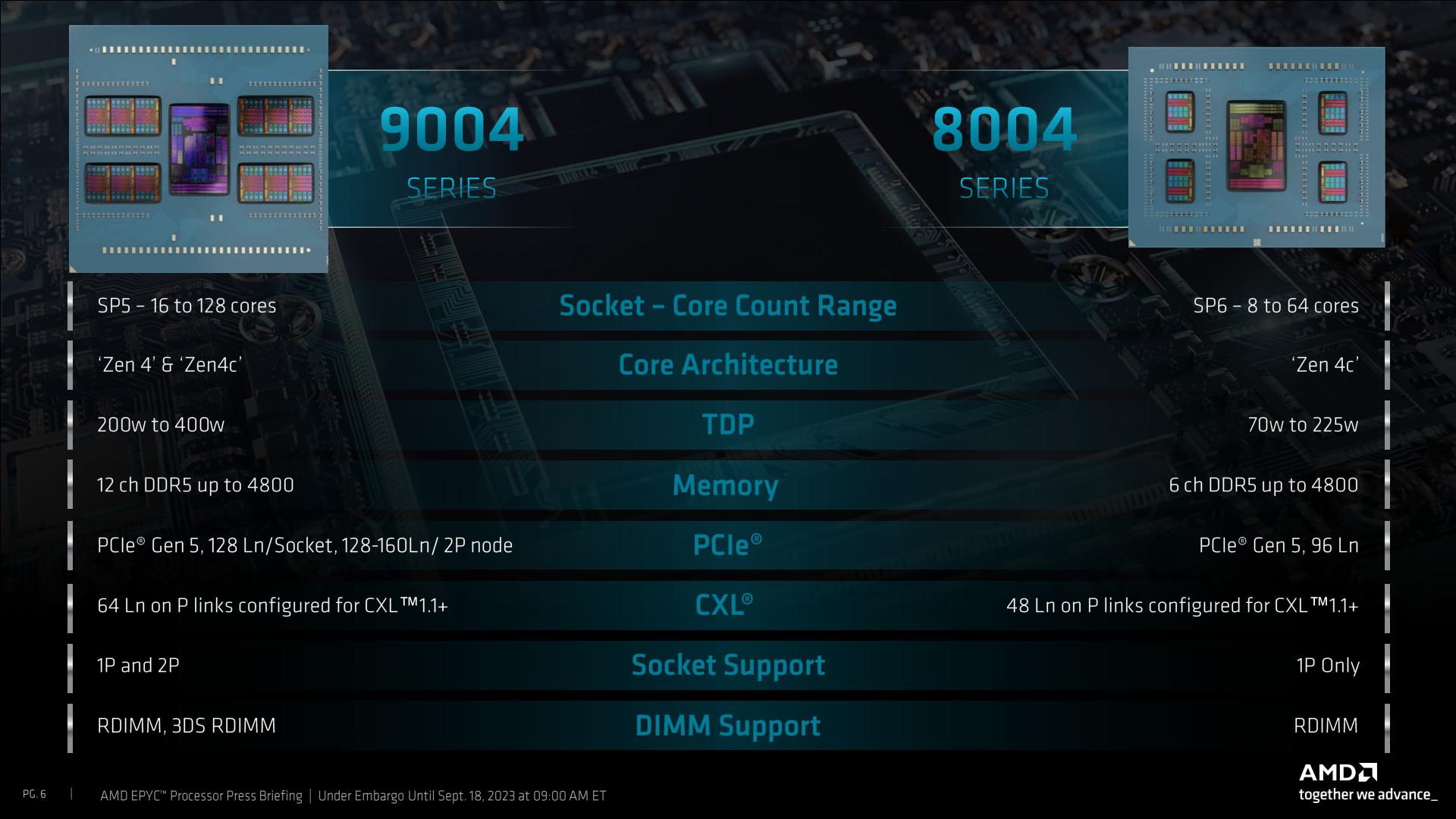

First unveiled by AMD last summer at Financial Analyst Day 2022, Siena is AMD’s first dedicated entry into the telco, networking, and edge market. Compared to AMD’s general-purpose Genoa chips (EPYC 9004), Siena offers fewer CPU cores and lower performance overall, instead optimizing the chips and platform for lower costs and better energy efficiency for use in non-datacenter environments. More broadly speaking, Siena is functionally the long-awaited low-end segment of the 4th generation EPYC stack.

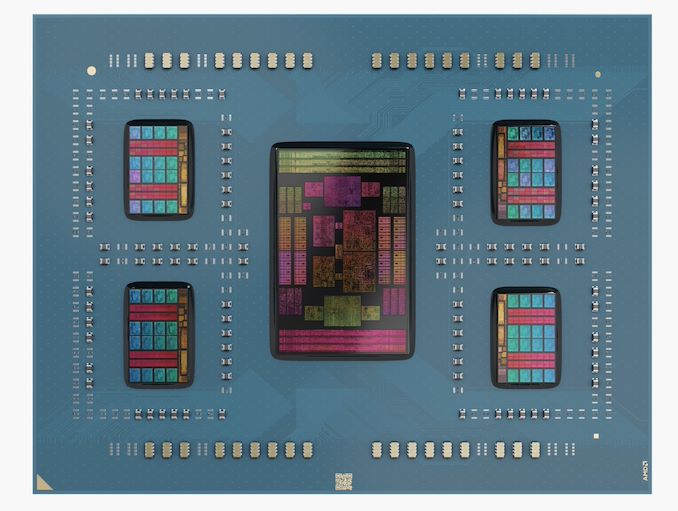

Unlike the launch of the previous three 4th generation EPYC segments – Genoa, Genoa-X, and Bergamo – the launch of Siena is a lower-key affair. Besides the reduced excitement that comes with the launch of lower-end hardware, there is, strictly speaking, no new silicon involved in this launch. Siena is comprised of the same 5nm Zen 4c core complex die (CCD) chiplets as Bergamo, which are paired with AMD’s one and only 6nm EPYC I/O Die (IOD). As a result, the EPYC 8004 family isn’t so much new hardware as it is a new configuration of existing hardware – about half of a Bergamo, give or take.

And that half Bergamo analogy isn’t just about CPU cores; it applies to the rest of the platform as well. Underscoring the entry-level nature of the Siena platform, Siena ships with fewer DDR5 memory channels and fewer I/O lanes than its faster, fancier counterpart. Siena only offers 6 channels of DDR5 memory, down from 12 channels for other EPYC parts, and 96 lanes of PCIe Gen 5 instead of 128 lanes. As a result, while Siena is still a true Zen 4 part through and through (right on down to AVX-512 support), it’s overall a noticeably lighter-weight platform than the other EPYC family members.

But even without new silicon to speak of, Siena is still bringing some hardware changes to the AMD ecosystem. For the launch of their lightweight server processor, AMD is introducing a new server socket: Socket SP6. Taking advantage of the lower number of I/O lanes and memory channels used by Siena – not to mention the smaller physical footprint of the reduced number of chiplets – socket SP6 chips are physically smaller and feature fewer LGA pads, designed to allow for suitably cheaper motherboards.

AMD Zen 4 CPU Sockets

| Socket | Chip Dimensions (x/y) | Pin Count | PCIe 5.0 Lanes | Memory Channels | Max TDP (W) | Max Sockets | Type |
|---|---|---|---|---|---|---|---|
| SP6 | 58.5mm x 75.4mm | 4844 | 96 | 6x DDR5 | 255? | 1P | LGA |
| SP5 | 72mm x 75.4mm | 6096 | 128 | 12x DDR5 | 400 | 2P | LGA |
| AM5 | 40mm x 40mm | 1718 | 28 | 2x DDR5 | 170 | 1P | LGA |

The LGA SP6 chips measure 58.5 x 75.4mm, down from 72 x 75.4mm for SP5, which makes them about 81% of the size. In terms of pin counts, we’re looking at a still-sizable 4844 pins, down from the 6096 pins used in SP5. Overall, it does make for a bit of an odd situation to have the only non-SP5 EPYC be the only EPYC without any new silicon in it, but we suspect this will not be the only place we see SP6 over the coming years.
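For readers who want to check the size comparison, here is a minimal Python sketch of the arithmetic. The dimensions and pin counts are taken from the socket table; the dictionary layout and helper names are purely illustrative.

```python
# Package dimensions (mm) and pin counts as listed in the socket table.
SOCKETS = {
    "SP6": {"x_mm": 58.5, "y_mm": 75.4, "pins": 4844},
    "SP5": {"x_mm": 72.0, "y_mm": 75.4, "pins": 6096},
}


def package_area(socket):
    """Footprint of the chip package in square millimetres."""
    return socket["x_mm"] * socket["y_mm"]


def pin_count(socket):
    """Number of LGA pads on the package."""
    return socket["pins"]


def ratio(smaller, larger, metric):
    """Ratio of a metric between two sockets named in SOCKETS."""
    return metric(SOCKETS[smaller]) / metric(SOCKETS[larger])


area_ratio = ratio("SP6", "SP5", package_area)
pin_ratio = ratio("SP6", "SP5", pin_count)

print(f"SP6 package area relative to SP5: {area_ratio:.0%}")
print(f"SP6 pin count relative to SP5:    {pin_ratio:.0%}")
```

Because both packages share the same 75.4mm dimension, the area ratio reduces to 58.5 / 72, which is where the roughly 81% figure comes from. The pin count shrinks by a similar proportion.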

Altogether, AMD is rolling out Siena with a sizable stack of 12 chips. There are essentially 6 tiers of chips, each differentiated by the number of Zen 4c CPU cores available, with each tier available in both a traditional chip or a fixed power Network Equipment-Building System (NEBS) friendly configuration, which offers a wider temperature range tolerance and is designed for deployments in less controlled conditions.

AMD EPYC 8004 Siena Processors

| Model | Cores / Threads | Base Freq (MHz) | 1T Freq (MHz) | L3 Cache | PCIe | Memory | TDP (W) | cTDP (W) | Price (1KU) |
|---|---|---|---|---|---|---|---|---|---|
| 8534P | 64 / 128 | 2300 | 3100 | 128MB | 96 x 5.0 | 6 x DDR5-4800 | 200 | 155-255 | $4,950 |
| 8534PN | 64 / 128 | 2000 | 3100 | 128MB | 96 x 5.0 | 6 x DDR5-4800 | 175 | - | $5,450 |
| 8434P | 48 / 96 | 2500 | 3100 | 128MB | 96 x 5.0 | 6 x DDR5-4800 | 200 | 155-225 | $2,700 |
| 8434PN | 48 / 96 | 2000 | 3000 | 128MB | 96 x 5.0 | 6 x DDR5-4800 | 155 | - | $3,150 |
| 8324P | 32 / 64 | 2650 | 3000 | 128MB | 96 x 5.0 | 6 x DDR5-4800 | 180 | 155-225 | $1,895 |
| 8324PN | 32 / 64 | 2050 | 3000 | 128MB | 96 x 5.0 | 6 x DDR5-4800 | 130 | - | $2,125 |
| 8224P | 24 / 48 | 2550 | 3000 | 64MB | 96 x 5.0 | 6 x DDR5-4800 | 160 | 155-225 | $855 |
| 8224PN | 24 / 48 | 2000 | 3000 | 64MB | 96 x 5.0 | 6 x DDR5-4800 | 120 | - | $1,075 |
| 8124P | 16 / 32 | 2450 | 3000 | 64MB | 96 x 5.0 | 6 x DDR5-4800 | 125 | 120-150 | $639 |
| 8124PN | 16 / 32 | 2000 | 3000 | 64MB | 96 x 5.0 | 6 x DDR5-4800 | 100 | - | $790 |
| 8024P | 8 / 16 | 2400 | 3000 | 32MB | 96 x 5.0 | 6 x DDR5-4800 | 90 | 70-100 | $409 |
| 8024PN | 8 / 16 | 2050 | 3000 | 32MB | 96 x 5.0 | 6 x DDR5-4800 | 80 | - | $525 |

The flagship Siena part is the EPYC 8534P, which offers 64 Zen 4c cores using 4 Zen 4c CCDs. The default TDP on this part is 200 watts, with a cTDP range of 115W up to 225W. For all practical purposes this is half of an EPYC Bergamo 9754, offering half as many CPU cores, half as much L3 cache, half as much memory bandwidth, and depending on how you dial in the cTDP, around half the TDP. Like the Bergamo family, these chips are aimed at customers who value core count over top-tier single-threaded performance, with the Zen 4c CPU cores topping out at just 3.1GHz when boosting, and running at a base clockspeed of 2.3GHz. The 8534P’s NEBS counterpart, the 8534PN, offers the same chip configuration at a 175W fixed TDP, and a lower base clockspeed of 2.0GHz, all in exchange for a wider -5C to +85C operating range.

At the other end of the spectrum is the EPYC 8024P. This part features just 8 CPU cores – AMD is using a single, half-enabled Zen 4c CCD here – with the L3 cache scaled down to that of a single CCD, all the while the full 6 channels of DDR5 memory and 96 PCIe lanes remain. This 8 core part has a base TDP of 90W, though that can be adjusted to be between 70W and 100W.

Otherwise, since AMD is using the same IOD as their other EPYC parts, many of the limitations and trade-offs with those parts remain. The highest supported memory speed is DDR5-4800, which can only be hit with 1 DPC. And not all of the PCIe lanes can be switched to CXL mode – in this case, just 48 lanes. Reflecting the lower-cost nature of the Siena platform, 3DS RDIMMs are not supported here, meaning that the platform’s maximum memory capacity is 12x96GB (1.152TB) of DDR5 RDIMMs.
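The memory figures above are easy to reproduce. The short Python sketch below derives the 1.152TB capacity ceiling and a theoretical peak bandwidth number for the platform. Two assumptions are not stated in the text: that the 12 DIMMs correspond to 6 channels at 2 DIMMs per channel, and that each DDR5 channel moves 8 bytes of data per transfer (a 64-bit data path, ECC bits excluded). Since DDR5-4800 is only reached at 1 DPC, the capacity and bandwidth maximums would not necessarily be hit at the same time.

```python
CHANNELS = 6                # DDR5 channels on Siena, per the article
DIMMS_PER_CHANNEL = 2       # assumption: 12 DIMMs total implies 2 DPC
MAX_RDIMM_GB = 96           # largest supported RDIMM without 3DS support
TRANSFER_RATE_MT_S = 4800   # DDR5-4800, reachable only at 1 DPC
BYTES_PER_TRANSFER = 8      # assumption: 64-bit data width per channel


def max_capacity_gb(channels, dimms_per_channel, dimm_gb):
    """Maximum installable memory in GB."""
    return channels * dimms_per_channel * dimm_gb


def peak_bandwidth_gb_s(channels, mt_per_s, bytes_per_transfer):
    """Theoretical peak bandwidth in GB/s (decimal gigabytes)."""
    return channels * mt_per_s * bytes_per_transfer / 1000


capacity = max_capacity_gb(CHANNELS, DIMMS_PER_CHANNEL, MAX_RDIMM_GB)
bandwidth = peak_bandwidth_gb_s(CHANNELS, TRANSFER_RATE_MT_S, BYTES_PER_TRANSFER)

print(f"Max capacity: {capacity} GB ({capacity / 1000:.3f} TB)")
print(f"Peak theoretical bandwidth at DDR5-4800: {bandwidth:.1f} GB/s")
```

These are theoretical ceilings rather than measured results. Running the same bandwidth function with 12 channels gives exactly double, which is the "half as much memory bandwidth" relationship to the larger EPYC parts.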

At first blush, I was surprised to see that AMD kept the same sizable EPYC IOD for their budget server part. But AMD has had nearly a year to stockpile defective IODs for salvage purposes. Arguably, a smaller IOD would be superior from an energy efficiency standpoint, but that is counterbalanced by the fact that AMD would need to spend the time taping out a new IOD for what is meant to be a cheap part that benefits from silicon reuse. I would be a bit more surprised if AMD doesn’t do a smaller IOD for future generations of chips in this product segment, but as has been the case since the start of AMD’s EPYC journey, they are taking things slowly and steadily here as they expand their server CPU offerings.

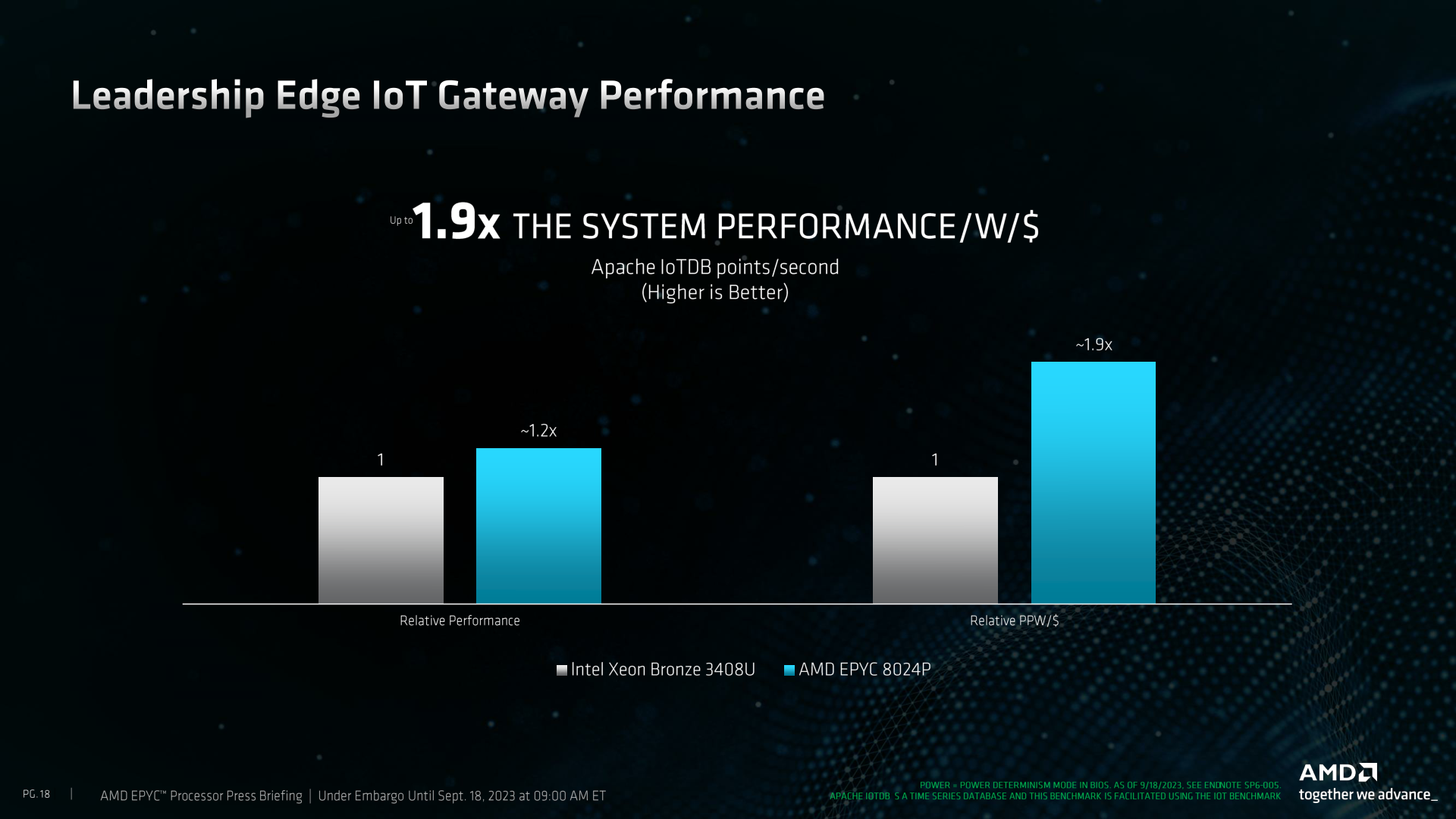

In any case, with the Siena/EPYC 8004 lineup, AMD is looking to drive home the cost argument for these chips, especially compared to Intel’s 4th generation Xeon (Sapphire Rapids) lineup. Since there’s no new silicon here, Siena doesn’t significantly change any of the calculus there, but Intel’s focus on accelerators versus AMD’s focus on all-out CPU performance means that AMD can bring a lot of CPU cores to bear even in cheaper configurations. In workloads that can’t take advantage of Intel’s accelerators, AMD believes this gives them a significant edge.

As always, vendor numbers should be taken with a grain of salt. But AMD is reasonably confident in the ability of their chips to hold the lead in perf-per-watt, especially when compared to a Xeon with a similar number of cores.

Wrapping things up, according to AMD, Siena chips are available now. The list prices range from $5,450 down to $409 in 1,000 unit quantities, though AMD’s bigger partners normally get better deals than that. Speaking of which, many of the usual suspects in the server space are launching new platforms in the coming weeks based on Siena, with Dell, Lenovo, and Supermicro all showing off edge-optimized systems.
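To put those list prices in perspective, the following Python sketch works out the price per core for each SKU, using only the core counts and 1KU prices from the processor table. The data structure and variable names are illustrative, and since these are list prices, actual street or volume pricing may differ.

```python
# (cores, 1KU list price in USD) for each SKU, from the processor table.
SIENA_SKUS = {
    "8534P": (64, 4950), "8534PN": (64, 5450),
    "8434P": (48, 2700), "8434PN": (48, 3150),
    "8324P": (32, 1895), "8324PN": (32, 2125),
    "8224P": (24, 855),  "8224PN": (24, 1075),
    "8124P": (16, 639),  "8124PN": (16, 790),
    "8024P": (8, 409),   "8024PN": (8, 525),
}

# List price divided by core count for every SKU.
price_per_core = {
    model: price / cores for model, (cores, price) in SIENA_SKUS.items()
}

# Premium of each NEBS-friendly (PN) part over its standard (P) sibling.
nebs_premium = {
    model: SIENA_SKUS[model][1] - SIENA_SKUS[model[:-1]][1]
    for model in SIENA_SKUS
    if model.endswith("PN")
}

cheapest_per_core = min(price_per_core, key=price_per_core.get)

for model, usd in sorted(price_per_core.items(), key=lambda kv: kv[1]):
    print(f"{model:7s} ${usd:6.2f} per core")
print(f"Lowest list price per core: {cheapest_per_core}")
```

By this measure the 24-core 8224P is the cheapest part per core, while the 64-core flagship carries a clear premium for its density. The NEBS-friendly parts cost between $116 and $500 more than their standard counterparts.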

5 Comments


ballsystemlord - Monday, September 18, 2023

@Ryan the 8534PN is listed as having both 128 cores and 128 threads and the 8024P 's pricing is missing the dollar sign. Thanks for your articles.

Ryan Smith - Monday, September 18, 2023

Thanks!

phoenix_rizzen - Monday, September 18, 2023

We use 16 core EPYC CPUs in tower servers in our schools (although we are moving to 2U rackmount systems where we have space for a proper rack). Will be interesting to see how the pricing compares for 8004-based systems.

7313 vs 7343 vs 8124 vs 9124

lemurbutton - Tuesday, September 19, 2023

AMD needs to stop comparing Epyc to Xeon. They need to compare to Ampere. When they do, this CPU looks a lot worse.

Hul8 - Tuesday, September 19, 2023

> Otherwise, since AMD is using the same IOD as their other EPYC parts, many of the limitations and trade-offs with those parts remain. The highest supported memory speed is DDR5-4800, which can only be hit with 1 DPC.

Wendell of Level1Techs recently tested a new Tyan server with an EPYC 9554 (so Genoa, not Siena). When updated with the latest BIOS and AGESA the system worked flawlessly with 2 DPC DDR5-4800.

2 DPC capabilities may be down to motherboard and IOD quality plus what the system manufacturer is confident allowing thru the firmware.

While Siena is unlikely to get the best IO dies, there will be some EPYC 9000 systems at least where the stated 1 DPC limitation no longer applies.