AMD Widens Availability of Ryzen AI Software For Developers, XDNA 2 Coming With Strix Point in 2024

by Gavin Bonshor on December 6, 2023 3:00 PM EST

Alongside the announcement that AMD is refreshing its Phoenix-based Ryzen 7040HS mobile series with the newer 'Hawk Point' 8040HS family for 2024, AMD is set to drive more AI development within the PC market. To provide a more holistic end-user experience for adopters of hardware with the Ryzen AI NPU, AMD has made the latest version of its Ryzen AI Software available to the broader ecosystem. The software allows developers to deploy machine learning models in their applications and deliver more comprehensive features in tandem with the Ryzen AI NPU and Microsoft Windows 11.

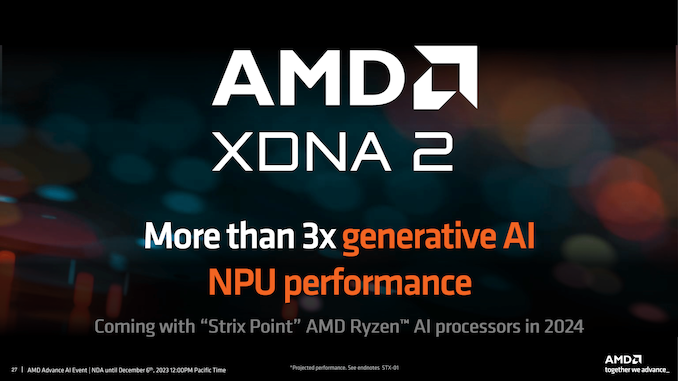

AMD has also officially announced the successor to its first-generation Ryzen AI NPU (XDNA), which is currently in AMD's Ryzen 7040HS mobile series and also powers the refreshed Hawk Point Ryzen 8040HS series. Promising more than 3x the generative AI performance of the first generation XDNA NPU, XDNA 2 is set to launch alongside AMD's next-generation APUs, codenamed Strix Point, sometime in 2024.

AMD Ryzen AI Software: Version 1.0 Now Widely Available to Developers

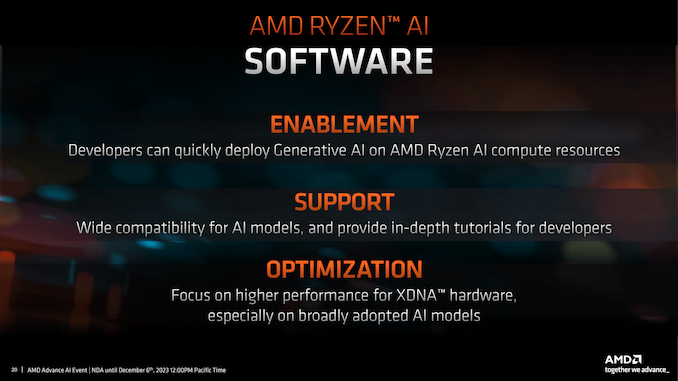

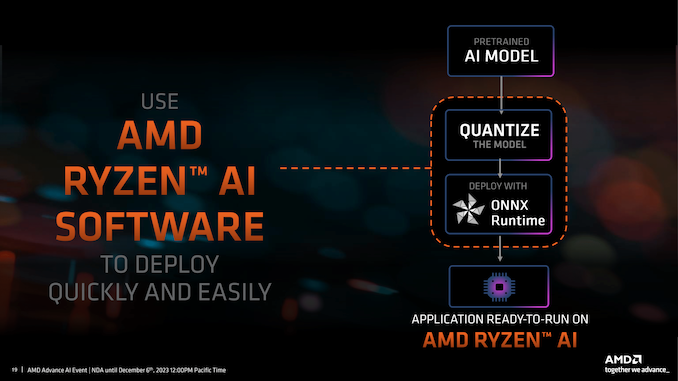

Along with the most recent release of its Ryzen AI software (Version 1.0), AMD is making it more widely available to developers. The release gives software engineers and developers the tools to create new features and software optimizations that use generative AI and large language models (LLMs). New to Version 1.0 of the Ryzen AI software is support for the open-source ONNX Runtime machine learning accelerator, which includes support for mixed precision quantization, covering the UINT16/32 and INT16/32 integer formats and the FLOAT16 floating point format.
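To make the quantization formats mentioned above more concrete, here is a minimal sketch of generic affine (scale and zero-point) quantization to 16-bit integers. It is not AMD's quantizer and does not use the Ryzen AI Software; the names `QuantParams`, `compute_params`, `quantize`, and `dequantize` are hypothetical and exist only for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class QuantParams:
    """Illustrative container for affine quantization parameters (hypothetical)."""
    scale: float
    zero_point: int
    qmin: int
    qmax: int


def compute_params(values, bits=16, signed=False):
    """Derive scale and zero point so the value range maps onto the integer range."""
    if signed:
        qmin, qmax = -(1 << (bits - 1)), (1 << (bits - 1)) - 1
    else:
        qmin, qmax = 0, (1 << bits) - 1
    # Always include 0.0 in the range so that zero is exactly representable.
    lo = min(min(values), 0.0)
    hi = max(max(values), 0.0)
    # Fall back to a scale of 1.0 when every value is zero.
    scale = (hi - lo) / (qmax - qmin) or 1.0
    zero_point = int(round(qmin - lo / scale))
    zero_point = max(qmin, min(qmax, zero_point))
    return QuantParams(scale, zero_point, qmin, qmax)


def quantize(values, p):
    """Map floats to integers, clamping to the representable range."""
    return [
        max(p.qmin, min(p.qmax, int(round(v / p.scale)) + p.zero_point))
        for v in values
    ]


def dequantize(qvalues, p):
    """Map integers back to approximate float values."""
    return [(q - p.zero_point) * p.scale for q in qvalues]
```

Calling `compute_params(weights, bits=16, signed=False)` would correspond to a UINT16-style mapping and `signed=True` to an INT16-style one. In a mixed precision scheme, different tensors in the same model would be given different bit widths, which is an assumption about the general technique rather than a description of AMD's implementation.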

AMD Ryzen AI Version 1.0 also supports PyTorch and TensorFlow 2.11 and 2.12, which broadens the range of models and LLMs that software developers can run to create new and innovative features for software. AMD's collaboration with Hugging Face also offers a pre-optimized model zoo, a strategy designed to reduce the time and effort required by developers to get AI models up and running. This also makes the technology more accessible to a broader range of developers right from the outset.

AMD's focus isn't just on providing the hardware capabilities through the XDNA-based NPU but on allowing developers to exploit these features to their fullest. The Ryzen AI software is designed to facilitate the development of advanced AI applications, such as gesture recognition, biometric authentication, and other accessibility features, including camera backgrounds.

Offering early access support for models like Whisper and LLMs, including OPT and Llama-2, indicates AMD's growing commitment to giving developers as many tools as possible. These tools are pivotal for building natural language speech interfaces and unlocking other Natural Language Processing (NLP) capabilities, which are increasingly becoming integral to modern applications.

One of the key benefits of the Ryzen AI Software is that it allows software running these AI models to offload AI workloads onto the Neural Processing Unit (NPU) in Ryzen AI-powered laptops. The idea is that running these workloads on the NPU rather than on the Zen 4 cores is more power efficient, which should help improve overall battery life.
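As a rough illustration of what offloading with a CPU fallback might look like from an application's point of view, the sketch below picks an ordered list of ONNX Runtime execution providers. It assumes the NPU is exposed under the provider name `VitisAIExecutionProvider`, which is not stated in this article; the helper `pick_providers` is hypothetical.

```python
def pick_providers(
    available,
    preferred=("VitisAIExecutionProvider",),  # assumed NPU provider name
    fallback="CPUExecutionProvider",
):
    """Return providers in priority order: preferred accelerators first, CPU last."""
    chosen = [name for name in preferred if name in available]
    if fallback in available and fallback not in chosen:
        chosen.append(fallback)
    if not chosen:
        raise RuntimeError("no usable execution provider found")
    return chosen
```

With ONNX Runtime installed, the list from `onnxruntime.get_available_providers()` could be passed in as `available`, and the result handed to `onnxruntime.InferenceSession(model_path, providers=...)`, where `model_path` stands in for a model file of your own. Keeping the CPU provider at the end of the list means the same application should still run on machines without an NPU, only without the power-efficiency benefit described above.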

A complete list of the Ryzen AI Software Version 1.0 changes can be found here.

AMD XDNA 2: More Generative AI Performance, Coming With Strix Point in 2024

Alongside the refinements to the Ryzen AI NPU block used in the current Ryzen 7040 mobile and the upcoming Ryzen 8040 mobile chips comes the announcement of its successor. AMD has announced their XDNA 2 NPU, designed to succeed the current Ryzen AI (XDNA) NPU and boost on-chip AI inferencing performance in 2024 and beyond. It's worth highlighting that XDNA is a dedicated AI accelerator block integrated into the silicon. It came about through AMD's 2022 acquisition of Xilinx, which developed the technology behind Ryzen AI and is driving AMD's commitment to AI in the mobile space.

While AMD hasn't provided any technical details yet about XDNA 2, AMD claims more than 3x the generative AI performance with XDNA 2 compared to XDNA, currently used in the Ryzen 7040 series. It must be noted that these gains to generative AI performance are currently estimated by AMD engineering staff and aren't a guarantee of the final performance.

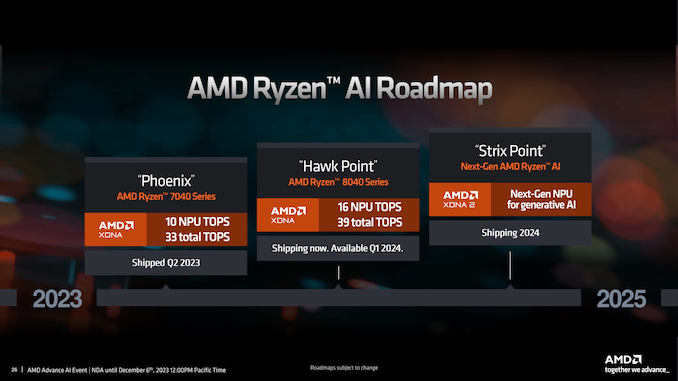

Looking at AMD's current Ryzen AI roadmap from 2023 (Ryzen 7040 series) to 2025, we can see that the next generation XDNA 2 NPU is coming in the form of Strix Point-based APUs. Although details on AMD's upcoming Strix Point processors are slim, we now know that AMD's XDNA 2-based NPU and Strix Point will start shipping sometime in 2024, which could point to a general release towards the second half of 2024 or the beginning of 2025. We expect AMD to start detailing their XDNA 2 AI NPU sometime next year.

20 Comments

bernstein - Wednesday, December 6, 2023 - link

So whats the point of all those tops on an end-user pc? outside of apple there really is no software that does any LLM stuff on device, its all done in the cloud…

meacupla - Thursday, December 7, 2023 - link

Microsoft wants to push copilot, and is asking for CPUs/APUs to have something like 8000 copilot AI points. And 8000 copilot points (I don't remember the exact number or units) can't quite be achieved with a 12CU RDNA3 GPU alone.

mode_13h - Thursday, December 7, 2023 - link

In its current form, Ryzen AI isn't faster than the GPU. If you look at the slide entitled AMD Ryzen AI Roadmap, it shows that Hawk Point's Ryzen AI will only provide 16 of the SoC's 39 total TOPS.

Ryzen AI isn't about adding horsepower, but rather about extending battery life when using AI.

nandnandnand - Saturday, December 9, 2023 - link

It looks like Strix Point XDNA will be faster and more efficient than the iGPU, which is interesting.

mode_13h - Monday, December 11, 2023 - link

Yeah, as long as the AI engine's clocks stay in the sweet spot and the GPU doesn't get a lot more powerful.

45 TOPS is a big number, though. To put it in perspective, a 75 W Nvidia GTX 1050 Ti could only manage about 8 TOPS, and that was even using its dp4a (8-bit dot-product + accumulate) instruction.

...however, AI is bandwidth-hungry and the GTX 1050 Ti had over 100 GB/s of memory bandwidth, so I'm not sure how much it makes sense to scale up compute without increasing bandwidth to match. A little on-chip SRAM only gets you so far...

nandnandnand - Monday, December 11, 2023 - link

Hawk Point will be an interesting test case. The silicon should not have changed, so they can only get 40-60% more performance by increasing the clock speeds. I think it was no more than 1.25 GHz before, so they may have ramped it up to exactly 2 GHz (60% higher). Source: https://chipsandcheese.com/2023/09/16/hot-chips-20...

XDNA 2.0 means business and must use improved or larger silicon. Also, if Strix Halo rumors are accurate, Strix Point and Halo will have the same 45-50 TOPS performance, but Halo gets a doubled memory bus width.

mode_13h - Tuesday, December 12, 2023 - link

I hadn't heard about that memory bus. So, I guess AMD is joining the on-package LPDDR5X crowd?

nandnandnand - Monday, December 25, 2023 - link

Very likely, but I'd expect to see it used with the new CAMM standard too.

Strix Halo is for laptops first, mini PCs second. We'll see how upgradeable the memory is.

Threska - Thursday, December 7, 2023 - link

Well AI is far more than LLMs. It's currently being done with compute shaders.

mode_13h - Thursday, December 7, 2023 - link

> outside of apple there really is no software that does any LLM stuff on device

LLMs only gained such public attention about 1 year ago, whereas Ryzen AI launched in Phoenix, back in Q2. It obviously wasn't designed for LLMs, especially given that it's essentially some IP Xilinx already had from before their acquisition by AMD.

What AMD said about Ryzen AI at the time of launch is that it was designed for realtime AI workloads, like audio & video processing for video conferencing. Intel actually had a more extensive set of example uses, in their presentations pitching the new VPU in Meteor Lake.